Hardware Architecture

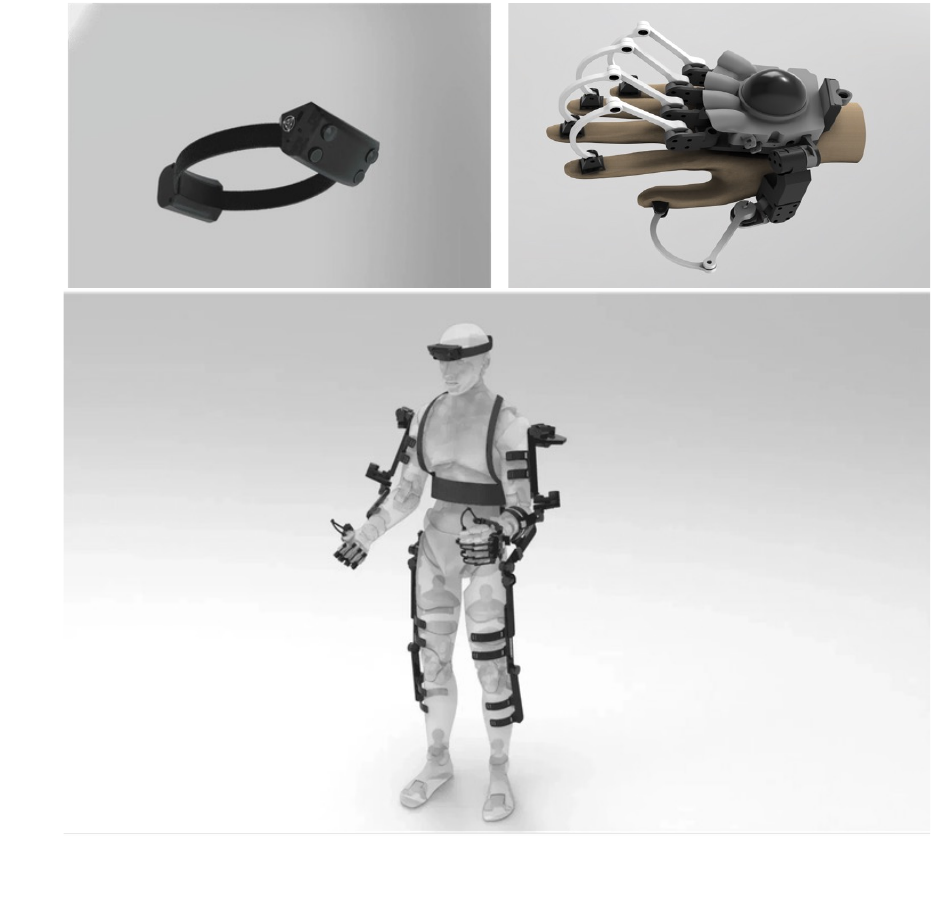

HumanEx Suite

A modular full-body data collection system for humanoid intelligence. From egocentric vision to whole-body locomotion, HumanEx captures the complete spectrum of human motion and perception.

Egocentric Vision

Simulates the human visual perspective, capturing real attention patterns and interaction intent. Stereo RGB and infrared cameras provide critical input for end-to-end models.

Modular Wearable

Lightweight head and hand units with integrated batteries and storage for wireless operation. Full-body exoskeleton supports extended wear. Flexibly adapts from head-only to whole-body configurations.

Multimodal Sync

Hardware-level temporal synchronization ensures timestamp alignment across head, hand, and full-body exoskeleton sensors with zero drift.
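To illustrate what timestamp alignment means downstream, here is a minimal sketch that pairs each head-camera frame with the nearest hand-sensor sample on a shared clock. The function name `align_nearest`, the sample rates, and the `max_gap` threshold are assumptions made for illustration; they do not describe the HumanEx firmware or data format.

```python
from bisect import bisect_left

def align_nearest(ref_ts, other_ts, max_gap):
    """For each reference timestamp, return the index of the nearest sample
    in other_ts, or None if that sample is farther away than max_gap.
    Both lists must be sorted ascending and use the same clock (seconds)."""
    matches = []
    for t in ref_ts:
        i = bisect_left(other_ts, t)
        # Only the samples just before and just after t can be the nearest.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(other_ts)]
        best = min(candidates, key=lambda j: abs(other_ts[j] - t), default=None)
        if best is not None and abs(other_ts[best] - t) > max_gap:
            best = None  # no sample close enough: treat as a sync failure
        matches.append(best)
    return matches

# Hypothetical timestamps: a ~30 Hz head camera and a faster hand stream
# that stops early, so the last camera frame has no partner.
head_ts = [0.000, 0.033, 0.067, 0.100]
hand_ts = [0.001, 0.011, 0.021, 0.031, 0.041, 0.061, 0.071]

pairs = align_nearest(head_ts, hand_ts, max_gap=0.008)
print(pairs)
```

With hardware-level synchronization the residual gaps should stay small, so a tight `max_gap` mainly serves to flag missing samples rather than clock drift.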

Basic Configuration

Egocentric Vision Unit

The head-mounted unit captures first-person visual data with dual stereo RGB cameras simulating binocular vision for depth perception, dual infrared cameras for precise hand tracking, and an integrated 6-axis IMU for head pose and motion trajectory recording. Its lightweight design with hot-swappable batteries enables comfortable, extended data collection sessions.

Tabletop Configuration

Head Unit + Dexterous Hand Exoskeleton

Combining first-person vision with bimanual dexterous manipulation, this configuration captures real-world task scenarios with joint-level precision tracking and force feedback. Multi-modal fusion positioning integrates IR, IMU, and hand recognition for high-accuracy hand localization. Integrated wrist-mounted batteries eliminate external power requirements.

Full-Body Exoskeleton

Built for Loco-Manipulation

A multi-sensor fusion system combining joint encoders and IMUs, purpose-built for whole-body locomotion and manipulation data collection. It physically measures absolute joint angles with zero cumulative drift and pairs them with high-frequency spatial attitude compensation.

Joint Encoders

Physical measurement of absolute angles, zero cumulative drift

IMU Sensors

Spatial attitude perception, high-frequency dynamic compensation

Forward Kinematics

Real-time alignment of multimodal data and skeletal chain computation

Full-Body Pose

High-precision, occlusion-free loco-manipulation data output
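The encoder-to-pose step above can be sketched with a simplified planar chain. This is an illustrative assumption, not the HumanEx solver: a real skeletal chain is three-dimensional and would combine encoder angles with IMU orientation, and the link lengths below are hypothetical.

```python
import math

def forward_kinematics_2d(link_lengths, joint_angles):
    """Return the (x, y) position of every joint along a planar serial chain.
    joint_angles are measured relative to the parent link, in radians."""
    x, y, heading = 0.0, 0.0, 0.0
    points = [(x, y)]  # the chain root sits at the origin
    for length, angle in zip(link_lengths, joint_angles):
        heading += angle  # accumulate relative angles into a world heading
        x += length * math.cos(heading)
        y += length * math.sin(heading)
        points.append((x, y))
    return points

# Hypothetical two-link arm: 0.30 m upper arm, 0.25 m forearm.
# Shoulder rotated +90 degrees, elbow rotated -90 degrees.
arm = forward_kinematics_2d([0.30, 0.25], [math.pi / 2, -math.pi / 2])
wrist_x, wrist_y = arm[-1]
print(round(wrist_x, 3), round(wrist_y, 3))
```

Because each position depends only on the currently measured angles, an error in one frame does not carry over into the next, which is the property the "zero cumulative drift" claim relies on.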

Occlusion-Free by Design

The physical exoskeleton measures joint angles directly, without relying on visual features, so it is fundamentally immune to lighting changes and limb occlusion during complex manipulation tasks.

Unrestricted Mobility

Self-contained system design eliminates external base stations. Supports simultaneous large-range locomotion and fine manipulation for full-body coordinated data collection without spatial constraints.

Data Samples

From Collection to Deployment

Our system captures diverse manipulation and locomotion data across multiple scenarios, from tabletop assembly tasks to whole-body teleoperation of humanoid robots.

Data Validation

Four-Level Verification Pipeline

A multi-tiered validation system, from hardware-level calibration to expert human review, ensuring every frame meets the highest quality standards for VLA model training. Our pipeline reduces the data rejection rate to below 2%.

Hardware Calibration

- Camera intrinsic and multi-sensor extrinsic joint calibration

- Timestamp synchronization and spatial coordinate unification

- Zero-point calibration and environmental baseline confirmation before each session
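As a rough illustration of zero-point calibration, the sketch below averages raw encoder readings captured while the wearer holds a reference pose, then subtracts those offsets from later frames. The reference pose, the function names, and the sample values are all assumptions; the actual HumanEx calibration procedure is not described here.

```python
from statistics import fmean

def estimate_zero_offsets(neutral_samples):
    """neutral_samples: list of frames, each a list of raw joint angles in
    degrees, recorded while holding a pose defined as all joints at zero."""
    n_joints = len(neutral_samples[0])
    return [fmean([frame[j] for frame in neutral_samples]) for j in range(n_joints)]

def apply_zero_offsets(raw_frame, offsets):
    """Subtract the per-joint zero offset from one raw frame."""
    return [raw - off for raw, off in zip(raw_frame, offsets)]

# Hypothetical readings for two joints during the reference pose.
neutral = [[1.9, -0.6], [2.1, -0.4], [2.0, -0.5]]
offsets = estimate_zero_offsets(neutral)

# A later raw frame, corrected with the session's offsets.
calibrated = apply_zero_offsets([47.0, 29.5], offsets)
print([round(v, 3) for v in calibrated])
```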

Automated Screening

- Auto-rejection of frames with severe motion blur, overexposure, or underexposure

- Filtering of abnormal jumps, discontinuous frames, and physically impossible motions

- Completeness checks ensuring no packet loss or truncation in multimodal streams
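Two of the screening checks listed above, abnormal jumps and stream completeness, can be sketched with simple threshold tests on a single joint channel. The thresholds, function names, and sample data are hypothetical, and a production screener would likely use per-joint limits and more robust statistics.

```python
def find_abnormal_jumps(angles_deg, timestamps, max_speed_deg_s):
    """Return indices i where the step from sample i-1 to i implies a joint
    speed above max_speed_deg_s, or where time does not move forward."""
    flagged = []
    for i in range(1, len(angles_deg)):
        dt = timestamps[i] - timestamps[i - 1]
        if dt <= 0:
            flagged.append(i)  # repeated or reversed timestamps are suspect too
            continue
        if abs(angles_deg[i] - angles_deg[i - 1]) / dt > max_speed_deg_s:
            flagged.append(i)
    return flagged

def find_dropped_frames(timestamps, nominal_period, tolerance=0.5):
    """Return indices i where the gap before sample i exceeds the nominal
    period by more than tolerance * nominal_period."""
    limit = nominal_period * (1.0 + tolerance)
    return [i for i in range(1, len(timestamps))
            if timestamps[i] - timestamps[i - 1] > limit]

# Hypothetical 100 Hz elbow channel with one missing stretch and one spike.
ts = [0.00, 0.01, 0.02, 0.05, 0.06]
elbow = [10.0, 10.5, 11.0, 12.0, 40.0]

jumps = find_abnormal_jumps(elbow, ts, max_speed_deg_s=500.0)
gaps = find_dropped_frames(ts, nominal_period=0.01)
print(jumps, gaps)
```

Here the 28-degree step in the last sample is flagged as a physically implausible jump, and the 30 ms gap before the fourth sample is flagged as likely packet loss.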

Algorithmic Verification

- Kinematic constraint validation of skeletal chain and joint angle consistency

- Simulation replay in physics engines (MuJoCo) for digital twin verification

- Contact detection algorithms evaluating end-effector environment interactions
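The kinematic constraint check can be illustrated with two simple tests: joint angles must stay within anatomical limits, and reconstructed segment lengths must match the wearer's measured lengths. The limit table, margins, and joint names below are hypothetical placeholders rather than values used by the HumanEx pipeline, and the MuJoCo replay step is not shown.

```python
import math

# Hypothetical joint limits in degrees (low, high).
JOINT_LIMITS_DEG = {
    "elbow_flexion": (0.0, 150.0),
    "knee_flexion": (0.0, 140.0),
}

def joint_limit_violations(frame, limits, margin_deg=2.0):
    """Return the names of joints whose angle lies outside
    [low - margin_deg, high + margin_deg]."""
    bad = []
    for name, angle in frame.items():
        low, high = limits[name]
        if angle < low - margin_deg or angle > high + margin_deg:
            bad.append(name)
    return bad

def bone_length_ok(parent_xyz, child_xyz, expected_m, rel_tol=0.02):
    """True if the distance between two joint positions matches the expected
    segment length within a relative tolerance."""
    return math.isclose(math.dist(parent_xyz, child_xyz), expected_m, rel_tol=rel_tol)

frame = {"elbow_flexion": 162.0, "knee_flexion": 35.0}
violations = joint_limit_violations(frame, JOINT_LIMITS_DEG)
forearm_ok = bone_length_ok((0.0, 0.0, 0.0), (0.0, 0.26, 0.0), expected_m=0.26)
print(violations, forearm_ok)
```

Frames that fail either test would be candidates for rejection or for closer inspection in the simulation replay stage.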

Expert Review

- Semantic consistency checks verifying actions match task intent and logic

- Keyframe confirmation of critical interaction moments (grasp, release)

- High-ratio sampling quality assessment by domain experts

Through our four-level verification system, the data rejection rate is reduced to below 2%, providing 100% trustworthy training data for VLA models.