Hi-WM: Human-in-the-World-Model for Scalable Robot Post-Training

Post-training is what turns a generalist robot policy into something that actually works. A model pre-trained on diverse manipulation data can grasp the broad strokes of robotic control, but deploying it in a specific environment — with its particular objects, lighting, and task requirements — almost always reveals failure modes that pre-training alone cannot address. The standard fix is human-in-the-loop correction: let the policy run, watch where it breaks, demonstrate the right behavior, and fine-tune. This works, but it is expensive. Every correction requires a physical robot, a prepared scene, a human operator, and a manual reset between episodes.

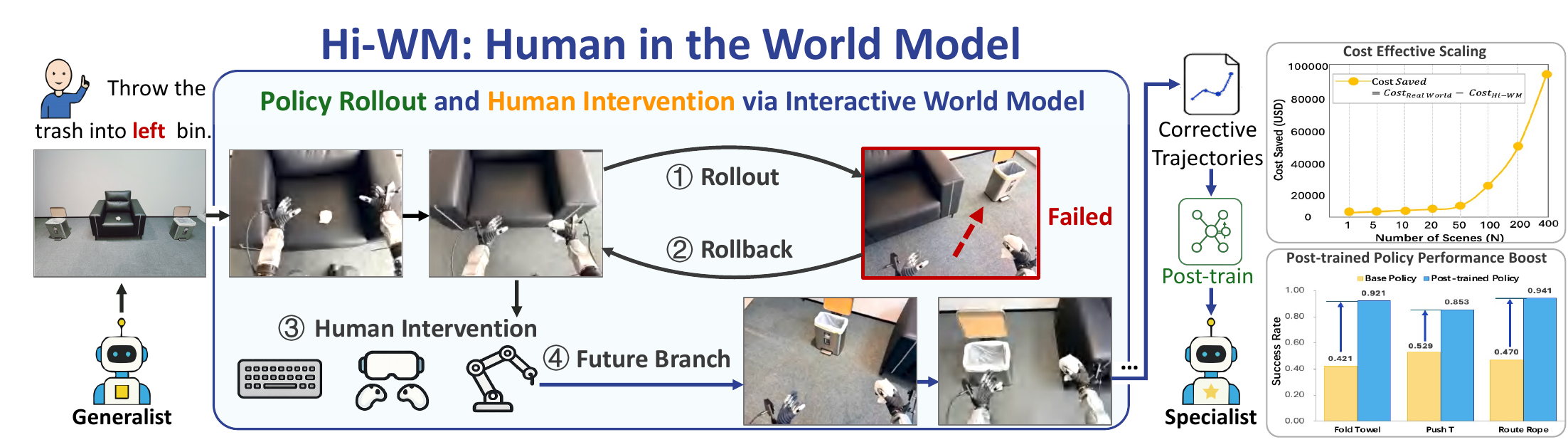

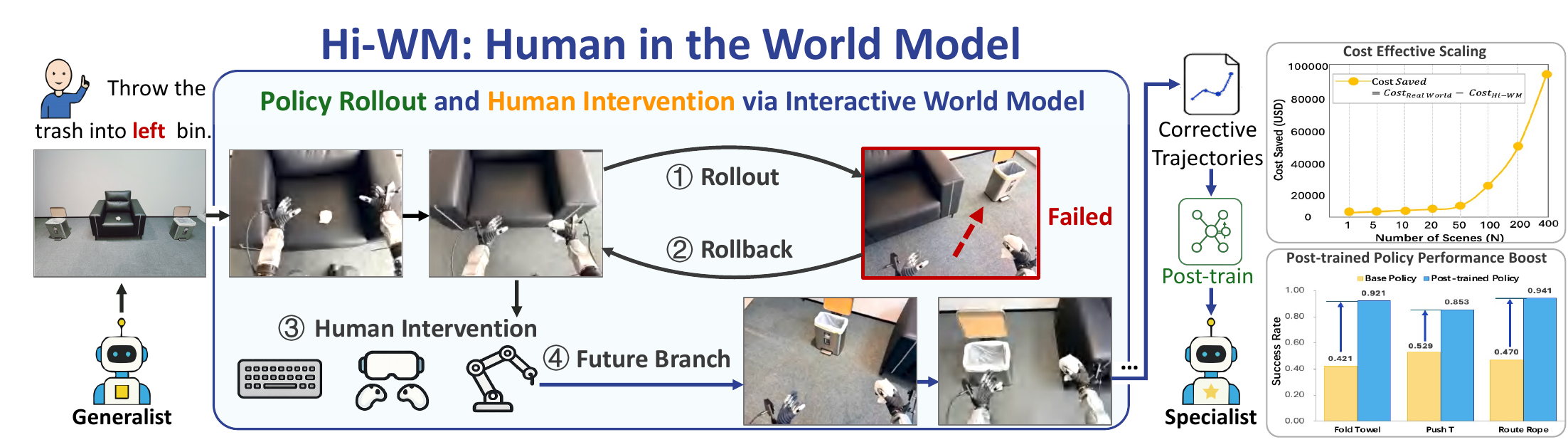

Hi-WM asks a simple question: what if the human could provide those corrections inside a learned world model instead of the physical world?

The World Model as a Corrective Workspace

The core idea of Hi-WM is to repurpose an action-conditioned world model — the kind typically used for imagination or evaluation — as an interactive workspace where human operators can intervene in policy rollouts. The policy runs inside the world model in closed loop: it observes a generated frame, predicts an action, and the world model produces the next frame. When the rollout begins to go wrong — a missed grasp, a misaligned push, an unstable fold — the human steps in and provides corrective actions directly through the world model.
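The loop described above can be sketched in a few lines. This is a minimal illustration, not the Hi-WM implementation: `rollout_with_intervention`, `Step`, and the toy policy, world model, and operator are hypothetical names, and frames and actions are reduced to small tuples so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

Frame = Tuple[float, ...]   # toy stand-in for a generated image (assumption)
Action = Tuple[float, ...]  # toy stand-in for a continuous action (assumption)


@dataclass
class Step:
    frame: Frame
    action: Action
    from_human: bool  # True when the operator overrode the policy


def rollout_with_intervention(
    policy: Callable[[Frame], Action],
    world_model: Callable[[Frame, Action], Frame],
    operator: Callable[[Frame, Action], Optional[Action]],
    first_frame: Frame,
    horizon: int,
) -> List[Step]:
    """Run the policy in closed loop inside the world model.

    `operator` returns None to accept the policy's action, or a corrective
    action that replaces it for this step.
    """
    steps: List[Step] = []
    frame = first_frame
    for _ in range(horizon):
        proposed = policy(frame)
        correction = operator(frame, proposed)
        action = proposed if correction is None else correction
        steps.append(Step(frame, action, correction is not None))
        frame = world_model(frame, action)  # the next frame is generated, not observed
    return steps


# Toy 1-D example: the policy always pushes +1 and would overshoot x = 3.
def toy_policy(frame: Frame) -> Action:
    return (1.0,)


def toy_world_model(frame: Frame, action: Action) -> Frame:
    return (frame[0] + action[0],)


def toy_operator(frame: Frame, proposed: Action) -> Optional[Action]:
    # Intervene only where the proposed action would overshoot the target.
    return (0.0,) if frame[0] + proposed[0] > 3.0 else None


steps = rollout_with_intervention(
    toy_policy, toy_world_model, toy_operator, first_frame=(0.0,), horizon=5
)
corrections = [s for s in steps if s.from_human]
```

Only the steps flagged `from_human` carry new supervision; in this sketch they are the ones that would be kept as corrective data for fine-tuning.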

This is not the same as generating synthetic demonstrations. The corrections are targeted: they happen precisely at the states where the current policy fails, which is where additional supervision is most valuable. And because the interaction happens in a learned model rather than the physical world, the process gains three properties that physical correction cannot offer.

Three Properties That Change the Economics

State caching and trajectory branching. When a policy fails at timestep t, the world model caches that state. The human provides one correction, and the system can then rewind to the same failure state to collect alternative corrections. A single failure becomes the seed for multiple recovery trajectories, giving dense supervision around exactly the behaviors the policy handles poorly. In physical execution, each correction is a one-shot event; in the world model, it can be multiplied.
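A rough sketch of caching and branching, under the assumption that a world-model state can be stored and reused as an ordinary value. `BranchingSession`, `cache_failure`, and `branch` are hypothetical names chosen for illustration, and the world model here is a toy function.

```python
from typing import Callable, Dict, List, Sequence, Tuple

State = Tuple[float, ...]   # toy stand-in for a cached world-model state
Action = Tuple[float, ...]
Trajectory = List[Tuple[State, Action]]


class BranchingSession:
    """Caches failure states and replays alternative corrections from them."""

    def __init__(self, world_model: Callable[[State, Action], State]) -> None:
        self.world_model = world_model
        self.cache: Dict[int, State] = {}

    def cache_failure(self, t: int, state: State) -> None:
        # Remember the state at the timestep where the policy went wrong.
        self.cache[t] = state

    def branch(self, t: int, corrective_actions: Sequence[Action]) -> Trajectory:
        # Rewind to the cached state and roll out one recovery trajectory.
        state = self.cache[t]
        trajectory: Trajectory = []
        for action in corrective_actions:
            trajectory.append((state, action))
            state = self.world_model(state, action)
        return trajectory


session = BranchingSession(lambda s, a: (s[0] + a[0],))
session.cache_failure(t=7, state=(2.0,))

# Three different recoveries collected from one failure state.
branches = [
    session.branch(7, [(1.0,), (1.0,)]),
    session.branch(7, [(0.5,), (0.5,), (1.0,)]),
    session.branch(7, [(2.0,)]),
]
```

Every branch starts from the identical cached state, which is the part a physical setup cannot reproduce without carefully restoring the scene.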

Reset-free data collection. Physical post-training requires resetting the scene between episodes — placing objects back in their starting positions, re-calibrating the robot, clearing the workspace. In the world model, there is nothing to reset. The operator can move continuously from one rollout to the next, intervening where needed, without any downtime.

Hardware-agnostic remote collection. The human operator never touches the physical robot. Corrections can be provided through a keyboard, a VR controller, or a teleoperation arm — from anywhere with an internet connection. This decouples the correction process from hardware access and physical location, enabling parallel data collection across multiple operators.

Making the World Model Faithful Enough

For Hi-WM to work, the world model must be more than visually plausible — it must be action-faithful. If the model doesn't accurately reflect what happens when a specific action is executed, the corrections collected inside it won't transfer to the real robot. We address this through three training strategies.

First, we condition the model on the full 14-dimensional continuous action space (6-DoF end-effector pose plus gripper state for each of two arms), rather than projecting actions onto a simplified discrete space. Second, we include failure trajectories in training — missed grasps, misaligned pushes, off-task states — so the model learns to distinguish between actions that succeed and actions that fail, rather than defaulting to optimistic predictions. Third, we collect edge-case data near workspace boundaries and motion limits, where positioning errors are most likely to occur.
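To make the 14-dimensional count concrete: each arm contributes 3 position values, 3 rotation values, and 1 gripper value, so two arms give 2 × 7 = 14. The sketch below assumes a particular field order and a three-parameter rotation; neither detail is specified in this post, and `ArmAction` and `pack_bimanual` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class ArmAction:
    position: Tuple[float, float, float]  # end-effector x, y, z
    rotation: Tuple[float, float, float]  # 3-parameter orientation (assumed form)
    gripper: float                        # gripper state, e.g. opening amount

    def to_list(self) -> List[float]:
        return [*self.position, *self.rotation, self.gripper]


def pack_bimanual(left: ArmAction, right: ArmAction) -> List[float]:
    """Concatenate both arms into one continuous action vector."""
    vector = left.to_list() + right.to_list()
    assert len(vector) == 14  # 2 arms x (6-DoF pose + gripper)
    return vector


left = ArmAction(position=(0.30, 0.10, 0.20), rotation=(0.0, 0.0, 0.0), gripper=1.0)
right = ArmAction(position=(0.30, -0.10, 0.20), rotation=(0.0, 0.0, 0.0), gripper=0.0)
action_vector = pack_bimanual(left, right)
```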

The result is a world model whose task-level predictions correlate strongly with real-world execution (Pearson r = 0.953), providing a reliable signal for both evaluation and intervention.
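For readers who want to run the same kind of check on their own model, a Pearson correlation between paired success rates can be computed as below. The numbers in the example are placeholders invented for illustration, not the data behind the reported r = 0.953, and the pairing (one world-model estimate and one real-world measurement per evaluated setting) is an assumption about how such a comparison would be set up.

```python
import math
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two paired samples."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two paired samples of equal length >= 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return cov / math.sqrt(var_x * var_y)


# Placeholder values only: success rate per setting, as predicted inside the
# world model and as measured on the real robot.
world_model_success = [0.40, 0.55, 0.70, 0.90]
real_world_success = [0.35, 0.60, 0.65, 0.95]

r = pearson_r(world_model_success, real_world_success)
```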

Real-World Results

We evaluate Hi-WM on three real-world manipulation tasks that span both rigid and deformable objects: folding a towel, pushing a T-shaped object to a target pose, and routing a rope through designated slots. Each task is tested with two policy backbones: Diffusion Policy (DP) and π₀.

| Method | Policy | Fold Towel | Push-T | Route Rope |
|---|---|---|---|---|
| Base Policy | DP | 42.1% | 52.9% | 47.0% |
| Base Policy | π₀ | 55.3% | 76.5% | 64.7% |
| WM Closed-Loop | DP | 76.3% | 64.7% | 70.6% |
| WM Closed-Loop | π₀ | 78.9% | 79.4% | 82.4% |
| Hi-WM (Ours) | DP | 92.1% | 85.3% | 94.1% |
| Hi-WM (Ours) | π₀ | 97.4% | 97.1% | 100.0% |

Hi-WM improves real-world success by an average of 37.9 percentage points over the base policy and 19.0 points over the world-model closed-loop baseline. The closed-loop baseline — which generates additional successful rollouts from the current policy without human correction — provides a useful control: it shows that simply adding more of the same kind of data helps, but targeted human correction at failure states helps substantially more.
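The averages quoted above can be reproduced from the table by taking the mean over all six task and policy combinations for each method. The sketch below uses the rounded percentages as printed, so the results agree with the quoted figures only up to rounding; the dictionary layout and function name are illustrative choices.

```python
from statistics import mean

# Success rates (%) from the results table, ordered as
# [Fold Towel, Push-T, Route Rope] for each policy backbone.
success = {
    "Base Policy":    {"DP": [42.1, 52.9, 47.0], "pi0": [55.3, 76.5, 64.7]},
    "WM Closed-Loop": {"DP": [76.3, 64.7, 70.6], "pi0": [78.9, 79.4, 82.4]},
    "Hi-WM":          {"DP": [92.1, 85.3, 94.1], "pi0": [97.4, 97.1, 100.0]},
}


def method_mean(method: str) -> float:
    """Average success over all tasks and both policy backbones."""
    rates = [value for per_policy in success[method].values() for value in per_policy]
    return mean(rates)


gain_over_base = method_mean("Hi-WM") - method_mean("Base Policy")
gain_over_wm = method_mean("Hi-WM") - method_mean("WM Closed-Loop")

print(f"Gain over base policy:    {gain_over_base:.2f} points")
print(f"Gain over WM closed-loop: {gain_over_wm:.2f} points")
```

Because the table entries are themselves rounded, the second difference lands close to a rounding boundary; the exact per-trial counts would be needed to reproduce the last digit reliably.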

Scaling and Cost

The economic argument for Hi-WM strengthens as the number of deployment scenes grows. Each additional scene in physical post-training requires robot time, scene preparation, and operator presence. In the world model, the marginal cost of a new scene is the cost of initializing the model with a new observation, which is essentially zero hardware cost. At the largest scale we evaluated, replacing physical correction with Hi-WM yields nearly US$100,000 in savings.

Performance also scales with data volume. Adding corrective data equal to 20% of the original training set improves success rates across all three tasks; increasing to 35% yields further gains. The improvements are monotonic within the tested range, suggesting that the world model has not yet saturated as a source of useful corrective supervision.

Generalization to New Scenes

A natural concern is whether corrections collected in the world model transfer to real-world conditions that differ from the training distribution. We test this on Push-T under three controlled variations: appearance changes (different object colors), background changes (different tabletop surfaces), and distractor objects (additional objects in the scene). In all three settings, the post-trained policy consistently outperforms the base policy, indicating that corrective data from the world model improves robustness to held-out scene variations.

Looking Forward

Hi-WM suggests that the role of world models in robotics extends beyond imagination and evaluation. A sufficiently faithful world model can serve as a reusable corrective substrate — a sandbox where the most expensive part of robot development (targeted human supervision) can happen without the constraints of physical hardware. Combined with our evaluation work on dWorldEval, this points toward a development workflow where world models mediate most of the iteration cycle, and physical deployment is reserved for final validation.

For more details, see the full paper at hi-wm.github.io. If you're interested in working on world models for robotics, we're hiring — get in touch.